Will AI Replace Medical Writers?

Table of Contents

1. Quick Answer

2. A Specialist’s Honest Answer

3. What is Creative Medical Writing?

4. What AI Can & Cannot Do in Creative Medical Writing

5. AI + Human Collaboration: The Best of Both Worlds

6. Future of AI in Medical Writing: Which Way Forward?

7. Take Home Message

Quick Answer

A Specialist's Honest Answer

Barely a week goes by without someone — a colleague, a relative, a LinkedIn connection — asking me some version of the same question: “Hasn’t AI replaced you in content writing?”

Let me be direct: AI will not replace skilled medical writers, but it will absolutely replace writers who refuse to evolve.

That distinction matters enormously for your career, for patient safety, and for the future of healthcare communication. But most discussions around this topic default to regulatory documentation or clinical trial writing.

This blog explores another space where the debate is becoming just as relevant: AI at the intersection of medicine, patient education and digital marketing.

So the real question becomes: “Will AI replace ‘creative’ medical content writing?”

My answer may not be what you expect.

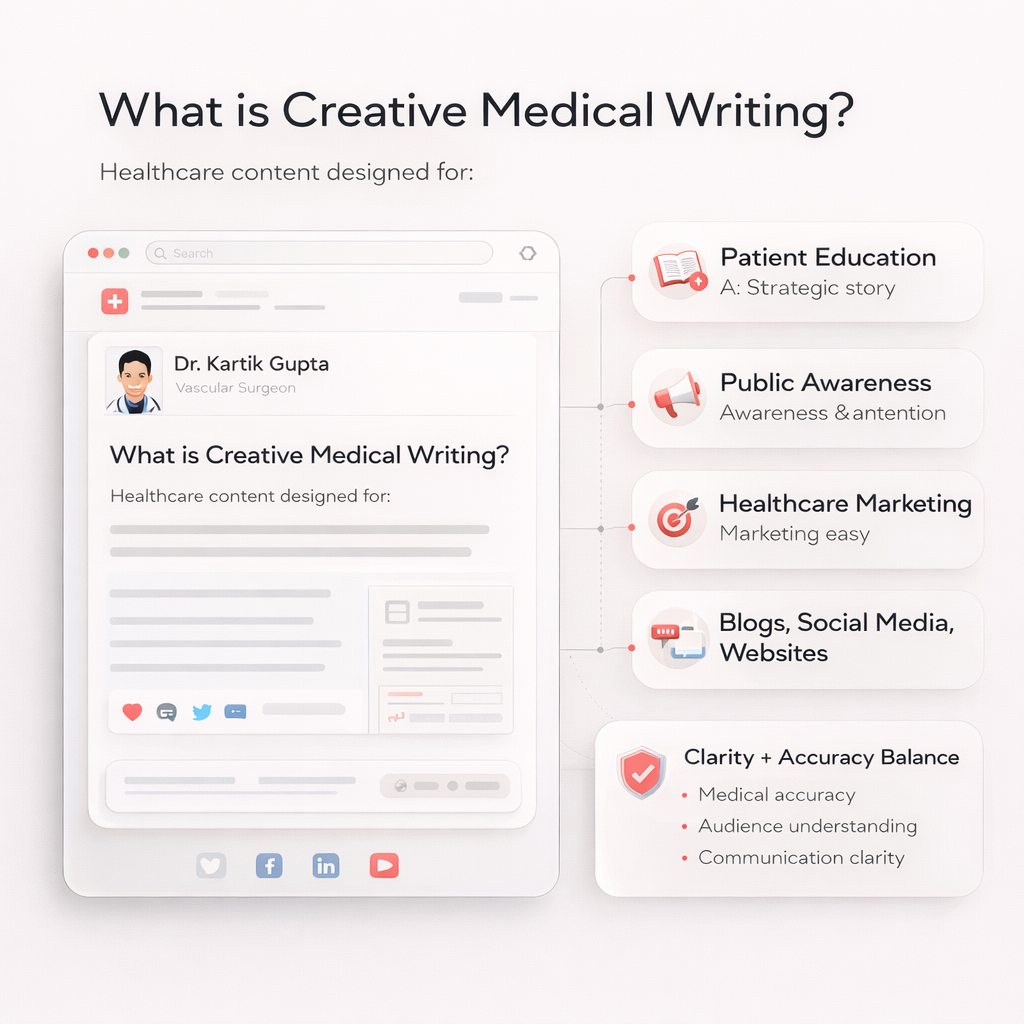

What is Creative Medical Writing?

Creative medical writing here refers to healthcare content created for patient education, marketing, or public awareness, including blogs, social media content, educational articles, learning materials and website copy.

What AI Can & Cannot Do in Creative Medical Writing

| AI-only Tasks | Human + AI Collab Tasks | Human-only Tasks |
| --- | --- | --- |
| High-volume content generation | Simplifying complex scientific data for the audience | Fact-checking and source accuracy |
| Creative editing & regulatory formatting | SEO & keyword research: brainstorming & strategy | Identifying missing context and removing bias |
| Summary, compilation and drafting outlines | Content repurposing across formats | Developing a unique voice & perspective on the topic |
| Content repurposing | Structuring long-form content | Maintaining a consistent voice and tone |

Where AI helps:

- Churning out large volumes of content quickly

- Summarising and compiling scattered information

- Paraphrasing or simplifying complex scientific data for a lay audience

- Editing across long-form content, including grammar checks

- Drafting an outline that acts as a scaffold for your actual writing

Where AI falls short:

- Finding sources – AI fabricates citations more often than you’d expect; always verify manually

- Maintaining tone – AI lacks personality, which is why the writing often feels robotic and inconsistent

- Knowing what’s missing – the most dangerous errors in medical writing are absent disclaimers and wrong statements

Here’s an overview of how AI actually performs across the core tasks of medical content:

| Criteria/Tasks | AI Tools |
| --- | --- |
| High-volume content generation | Fast and efficient |
| Drafting outlines & structure | Gives a scaffold to build on |
| Grammar & consistency checks | Efficient |
| Simplifying complex science | Provides an acceptably simplified summary |
| Fact-checking & source accuracy | Fabricates – always verify manually |
| Creativity & originality | Can suggest plagiarised or recycled ideas |
| Storytelling & narrative flow | Inconsistent – often breaks mid-piece |
| Audience adaptability | Adapts well with the right prompt |
| Maintaining a distinct voice | Fumbles without consistent training |
| Writing for patient empathy | Sometimes too empathetic, becomes elaborate and loses track |
| SEO & marketing | Efficient, but loses contextual relevance if not supervised |
| Medical accuracy & disclaimers | Not reliable |

In my observation, AI functions best as a productivity tool in medical writing while human writers remain responsible for verifying evidence, ensuring accuracy, and providing clinical context.

Here’s what the human-AI collaboration actually looks like in practice.

AI + Human Collaboration: The Best of Both Worlds

Generative AI in healthcare has made it easier to draft, summarise, and organise information at scale. But when medical writers collaborate with AI tools, they review and refine that output to ensure the final content is scientifically sound and responsibly communicated.

Their role typically involves:

- Interpreting clinical data and research outcomes

- Translating complex science into clear, audience-appropriate language

- Maintaining data privacy, regulatory, and ethical standards

- Structuring information to guide the reader’s understanding

- Ensuring medical accuracy while avoiding over-interpretation

Why Creative Medical Writing Needs Human Judgement

At first glance, AI tools look impressively capable. They can generate paragraphs in seconds and summarise dense scientific content with minimal prompts.

But medical content falls under YMYL (Your Money or Your Life), meaning the information can directly affect people’s health decisions. Even Google treats health information with extra scrutiny because, quite literally, lives are at stake!

When medical information is simplified for patient education, especially through short-form content such as social media, the risk increases.

With fewer words, there is less room to explain context, which can easily lead to misinterpretation, misinformation, or unsafe health decisions.

This is why E-E-A-T in healthcare content (Experience, Expertise, Authoritativeness, and Trustworthiness) is not just an SEO framework but a patient safety standard.

5 Reasons Why AI Won't Replace Medical Writers

The hype is real, but the data in 2026 suggests otherwise.

A survey published by EMWA in 2025 (n=106, 21 countries) found that 40.6% of respondents disagreed outright that AI could replace human writers, and this was a population already using the tools.

To understand more, let’s look at the 5 most important limitations of AI in medical writing.

#1 Hallucinated References

AI systems frequently fabricate sources and citations, including references to research papers that look convincing but do not exist.

This issue has been documented in various studies evaluating AI tools in academic and medical writing.

Since creative medical content — particularly short-form content on social media — is widely shared and forwarded, it becomes even more important to manually verify every reference before publishing.

#2 Lack of Clinical Reasoning

AI tools & LLMs like ChatGPT/Gemini are trained on vast datasets and can mimic human writing to produce coherent text. They predict the most likely sequence of words based on patterns in their training data without understanding clinical reasoning.

This is also why AI sometimes produces irrelevant or incomplete answers when asked highly specific medical questions that are not widely discussed online.
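To make the "most likely next word" idea concrete, here is a deliberately tiny sketch of a bigram model. It is an illustration only: real LLMs are far larger and work very differently under the hood, and the corpus, `train_bigrams` and `predict_next` are hypothetical names made up for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real model is trained on vastly more text.
CORPUS = (
    "aspirin reduces fever . "
    "aspirin reduces fever . "
    "aspirin reduces pain ."
)

def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

model = train_bigrams(CORPUS)
print(predict_next(model, "reduces"))   # picks the most frequent follower
print(predict_next(model, "warfarin"))  # word never seen in the corpus
```

The toy model answers "fever" after "reduces" purely because that pairing is the most frequent in its data, not because it has reasoned about a patient. For a word it has never seen, it has nothing to offer at all.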

In creative writing, this means that writers who rely on AI for research/brainstorming will have to manually scour sources to verify context, identify gaps, and determine what is actually relevant to their target audience.

Newer models are increasingly being developed to evaluate multiple scenarios to address this limitation.

#3 Errors of Omission

In medical content, what is missing from a piece can be more dangerous than what is written. Examples include missing safety disclaimers and incomplete explanations of side effects.

A recent publication on AI incidents in healthcare has reported their impact on patient safety, further emphasising the risks.

A 2025 Cornell study says LLMs have started omitting safety disclaimers, gradually shifting the onus onto readers/users.

Possible reasons for such omissions include:

- Training data bias and gaps: Many AI systems are trained on datasets that underrepresent certain populations or clinical scenarios.

- Black-box decision processes: Complex algorithms make it difficult to interpret how conclusions are generated or why risk factors are overlooked.

- Overfitting: AI may learn irrelevant associations between patient features and outcomes, focusing on patterns that are not clinically meaningful.

- Limited real-world validation: Systems that perform well in controlled research environments may fail to capture complications or side effects in real patient populations.

- Publication bias: Scientific literature often reports successful outcomes more frequently than negative or inconclusive findings, limiting balanced training data.

Such omissions can mislead readers if the content is not reviewed by someone with subject expertise and contextual understanding.
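Part of that review can be supported by a simple checklist script. The sketch below is a minimal example, assuming a hypothetical checklist: the element names and regular expressions in `REQUIRED_ELEMENTS` are illustrative and not drawn from any guideline.

```python
import re

# Hypothetical checklist: the element names and patterns are illustrative
# assumptions, not taken from any guideline or standard.
REQUIRED_ELEMENTS = {
    "medical disclaimer": r"not a substitute for (professional )?medical advice",
    "side effects": r"side effects?",
    "consult a clinician": r"(consult|speak to|talk to) (your|a) (doctor|physician|pharmacist)",
}

def find_missing_elements(draft, required=REQUIRED_ELEMENTS):
    """Return the names of checklist elements with no match in the draft."""
    return [
        name
        for name, pattern in required.items()
        if not re.search(pattern, draft, flags=re.IGNORECASE)
    ]

draft = (
    "Drug X can lower blood pressure within two weeks. "
    "Common side effects include dizziness."
)
print(find_missing_elements(draft))
```

A match only shows that certain wording is present. It cannot tell you whether the side effects listed are complete or the disclaimer is appropriate, so a script like this flags drafts for attention rather than replacing expert review.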

#4 Lack of Ethical Responsibility

When AI generates medical content, it does not take responsibility for the information it produces. If that information turns out to be incorrect or misleading, who is accountable?

In content creation, the responsibility ultimately falls on the author, organisation, or doctor publishing the content, even though they had no role in developing the algorithm.

Since content writing involves feeding raw data/information to the tool, there are concerns about data privacy. Without careful oversight, the use of AI tools in medical content could unintentionally expose confidential or proprietary information.

#5 Lack of Clear Guidelines and Regulations

The world is still catching up with the rapid growth of AI.

While several organisations are exploring frameworks, global guidelines on ethical & legal implications are still evolving, and at present, there is no universal agreement on:

- Where AI can be safely used in medical writing

- How AI-generated content should be reviewed

- Who is responsible for verifying accuracy

For a clinician publishing content under their name and credentials, this ambiguity has direct consequences.

Until stronger regulations and best-practice guidelines are established, human oversight remains indispensable for the use of AI in all forms of content writing.

Future of AI in Medical Writing: Which Way Forward?

The consensus among industry experts, researchers, and practitioners is clear: AI is not replacing the medical content writer. It is restructuring the role.

For creative medical content, here is where I see the role heading:

- From writing to directing: The day-to-day work will shift from generating every word to reviewing and refining AI output to ensure accuracy.

- From execution to strategy: AI will empower professionals to focus on clarity and compliance. For creative content, this means the medical writer will define the brand voice, writing tone, content architecture, and content strategy while AI churns out content.

As more healthcare content online is produced by AI, an emerging trend worth watching will be ‘model collapse’: a phenomenon where algorithms trained on AI-generated data gradually degrade in quality.

Future models risk learning from synthetic rather than authentic human-generated data. This will make the human voice in medical content a quality differentiator.
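A toy simulation gives some intuition for model collapse. This is a simplified sketch, not how language models are actually trained: it repeatedly fits a normal distribution to a small sample drawn from the previous fit, so each "generation" learns only from synthetic data. The function name and parameters are assumptions chosen for illustration.

```python
import random
import statistics

def simulate_collapse(generations=200, sample_size=10, seed=0):
    """Refit a Gaussian on its own samples and track the fitted spread."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0 stands in for human-written data
    history = [std]
    for _ in range(generations):
        # Each generation sees only synthetic data from the previous fit.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history

history = simulate_collapse()
print(f"initial spread: {history[0]:.3f}")
print(f"final spread:   {history[-1]:.3g}")
```

In this toy setting the spread of the fitted distribution shrinks over the generations: the variety present in the original data is gradually lost, which is the intuition behind the concern about models learning from their own output.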

Clear headings, short paragraphs, and logical flow make medical information easier for patients to understand and for search engines to crawl, index, and interpret.

Take Home Message

Will AI replace medical writers? No. Your clinical judgment, your voice, and your ability to know what is missing are not features an algorithm can replicate.

The medical writers who thrive in the next decade will be those who learn to incorporate AI into their workflows and collaborate with it.

Use it to work faster, not to think for you. Think of it like using the elevator instead of the stairs.

Looking to create healthcare content that patients trust and search engines reward? Let’s connect.