Limitations of AI: 7 Reasons AI Won't Replace Medical Writers

Quick Answer

AI cannot replace medical writers, and these seven limitations explain why:

- Hallucinated references: AI fabricates citations that sound real but don’t exist

- No clinical reasoning: It predicts words, not medical context

- Errors of omission: Safety disclaimers and side effects get dropped

- No accountability: AI takes no responsibility for wrong information

- No regulations yet: Global guidelines on AI in medical writing are still evolving

- Lack of originality: Outputs are pattern-based, not strategically crafted

- Skill erosion: Over-reliance weakens a writer’s core clinical judgment

If you’ve been on LinkedIn lately, you’ve probably seen some version of this claim: “AI is replacing writers.”

And to be fair, in some industries, that’s already happening. But in medical writing, not quite.

Still, AI is getting a massive push, and people are calling it pathbreaking, especially in healthcare. So how does AI fit into the picture?

Think of AI as an extremely efficient intern who can summarise information, draft content, and organise ideas in seconds. But would you trust an intern for patient counselling?

That’s essentially what we’re asking when we talk about AI replacing medical writers.

And even those closest to the technology aren’t fully convinced.

So the real question is: why hasn’t AI replaced medical writers yet?

To understand that, let’s look at the 7 most important limitations of AI in medical writing.

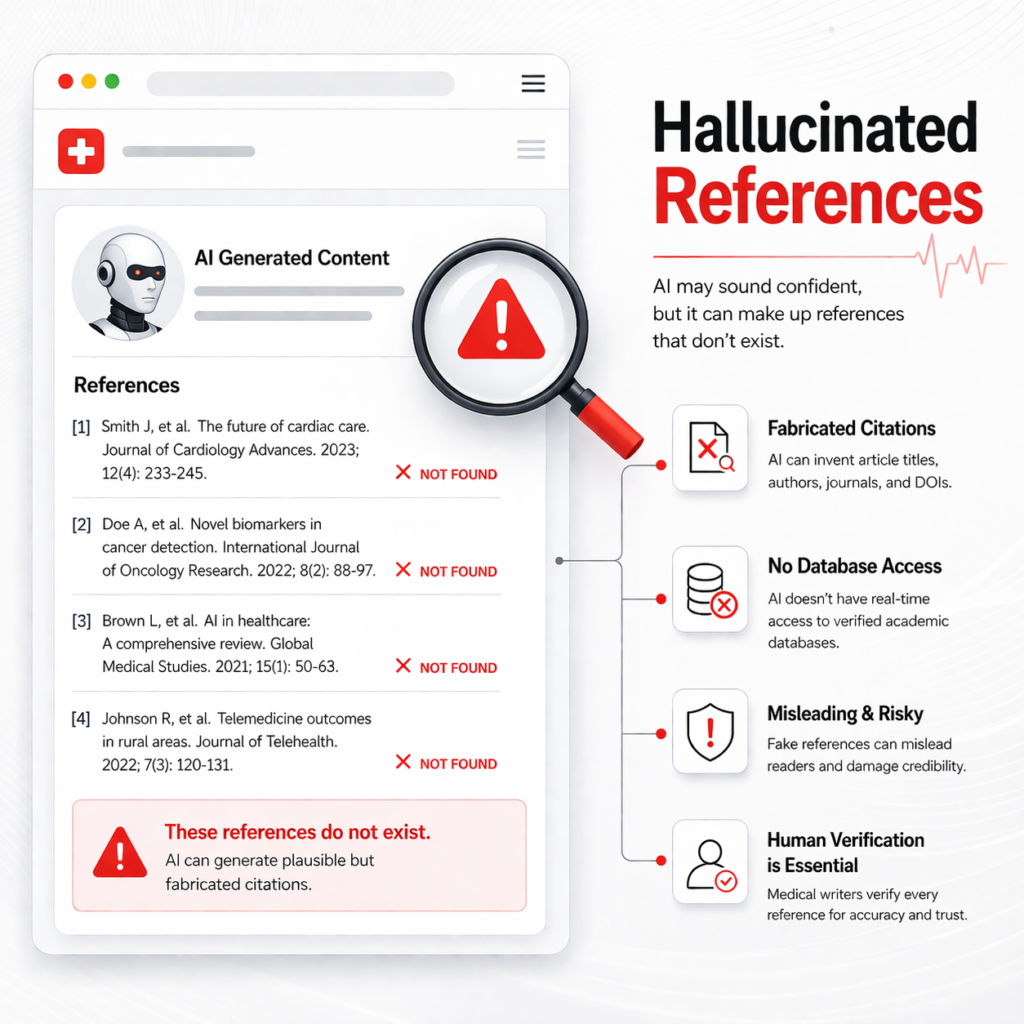

#1 Hallucinated References

AI systems frequently fabricate sources and citations, including research papers that look convincing but do not exist.

This issue has been documented in various studies evaluating AI tools in academic and medical writing.

Since creative medical content, particularly short-form content on social media, is widely shared and forwarded, it becomes even more important to manually verify every reference before publishing.
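For writers comfortable with a little scripting, the manual check can be organised with a short helper. The sketch below is only an illustration: the function name, the loose DOI pattern, and the two sample references are made-up placeholders, not real citations or a standard tool. It does not verify anything by itself. It simply turns a reference list into a checklist so that no citation is skipped during human review.

```python
import re

# Loose DOI pattern, assumed for illustration; real DOIs can vary.
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[^\s\"<>]+")


def build_verification_checklist(references):
    """Return one checklist row per reference for a human to verify."""
    checklist = []
    for ref in references:
        match = DOI_PATTERN.search(ref)
        # Strip trailing punctuation that often follows a DOI in a citation.
        doi = match.group(0).rstrip(".,;)") if match else None
        checklist.append({
            "reference": ref,
            "doi": doi,
            # Every reference still needs a human lookup, DOI or not.
            "needs_manual_lookup": True,
            "note": ("Look up the DOI and confirm title and authors"
                     if doi else
                     "No DOI found: search for the paper by title"),
        })
    return checklist


# Placeholder references, invented purely for this example.
sample_references = [
    "Smith J. Example title. J Example. 2020. doi:10.1234/abcd.5678.",
    "Doe A. Another example title. 2019.",
]

for row in build_verification_checklist(sample_references):
    print(row["doi"], "|", row["note"])
```

A reference with a well-formed DOI can still be fabricated, so the checklist is a starting point for verification, not a substitute for it.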

#2 Lack of Clinical Reasoning

AI tools are trained on vast datasets and work by mimicking human writing to produce coherent text. They predict the most likely sequence of words based on patterns in their training data, without understanding clinical reasoning.
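A toy example can make "predicting the most likely next word" concrete. The sketch below is a deliberately tiny word-pair counter with an invented three-sentence corpus. Real language models are vastly larger and more sophisticated, so treat this as an analogy for the idea of pattern-based prediction, not a description of how any actual AI tool is built.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count which word follows which in the training sentences."""
    follow_counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Invented training text: "safe" follows "is" more often than the caveat does.
corpus = [
    "the drug is safe",
    "the drug is safe",
    "the drug is contraindicated in pregnancy",
]

model = train_bigram_model(corpus)
print(predict_next_word(model, "is"))  # prints "safe"
```

The counter picks "safe" simply because it is the more common continuation in the corpus. Nothing in it weighs whether the patient is pregnant, which is the kind of context a medical writer has to supply.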

This is also why AI often produces irrelevant or incomplete answers when asked highly specific medical questions that are not widely discussed online.

In creative writing, this means that writers who rely on AI for research/brainstorming will have to manually scour sources to verify context, identify gaps, and determine what is actually relevant to their target audience.

While newer models are being developed to address this, they remain inadequate for the contextual demands of medical communication.

#3 Errors of Omission

In medical content, what is missing can be more dangerous than what is written: a dropped safety disclaimer, for example, or an incomplete explanation of side effects.

A recent publication on ‘AI incidents in healthcare’ has reported the impact on patient safety, further emphasising the risks.

A 2025 Cornell study says LLMs have started omitting safety disclaimers, gradually shifting the onus onto readers/users.

Possible reasons for such omissions include:

- Training data bias and gaps: Many AI systems are trained on datasets that underrepresent certain populations or clinical scenarios.

- Black-box decision processes: Complex algorithms make it difficult to interpret how conclusions are generated or why risk factors are overlooked.

- Overfitting: AI may learn irrelevant associations between patient features and outcomes, focusing on patterns that are not clinically meaningful.

- Limited real-world validation: Models that perform well in controlled research environments can miss side effects that appear in real patients.

- Publication bias: The scientific literature favours successful outcomes over negative or inconclusive findings, limiting the availability of balanced training data.

Such omissions can mislead readers if the content is not reviewed by someone with subject expertise and contextual understanding.
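A simple pre-review check can help a human reviewer spot obvious gaps. The sketch below is a rough illustration under stated assumptions: the topic names and keyword lists are hypothetical and would need to be defined by the writer for each piece of content. Keyword matching is crude. It can flag a draft that never mentions side effects, but it cannot confirm that what is mentioned is complete or correct.

```python
# Hypothetical checklist: topics a draft should cover, with example keywords.
REQUIRED_TOPICS = {
    "side effects": ["side effect", "adverse"],
    "contraindications": ["contraindicat"],
    "disclaimer": ["consult your doctor",
                   "not a substitute for medical advice"],
}


def find_missing_topics(draft, required=REQUIRED_TOPICS):
    """Return the topics for which no keyword appears in the draft."""
    text = draft.lower()
    return [
        topic
        for topic, keywords in required.items()
        if not any(keyword in text for keyword in keywords)
    ]


# Invented draft text for illustration.
draft = (
    "This medicine may cause side effects such as nausea. "
    "Consult your doctor before use."
)

print(find_missing_topics(draft))  # prints ['contraindications']
```

An empty result only means the keywords were found, so the draft still goes to a reviewer with subject expertise.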

#4 Lack of Ethical Responsibility

When AI generates medical content, it does not take responsibility for the information it produces. If that information turns out to be incorrect or misleading, who is accountable?

In healthcare content, responsibility ultimately falls on the author, organisation, or healthcare professional who publishes it, even though they had no role in developing the algorithm.

There are also growing concerns about data access and privacy. Many AI tools process user prompts on external servers, raising questions about how sensitive information is stored and used.

Without careful oversight, using AI tools in medical content could unintentionally expose confidential or proprietary information.

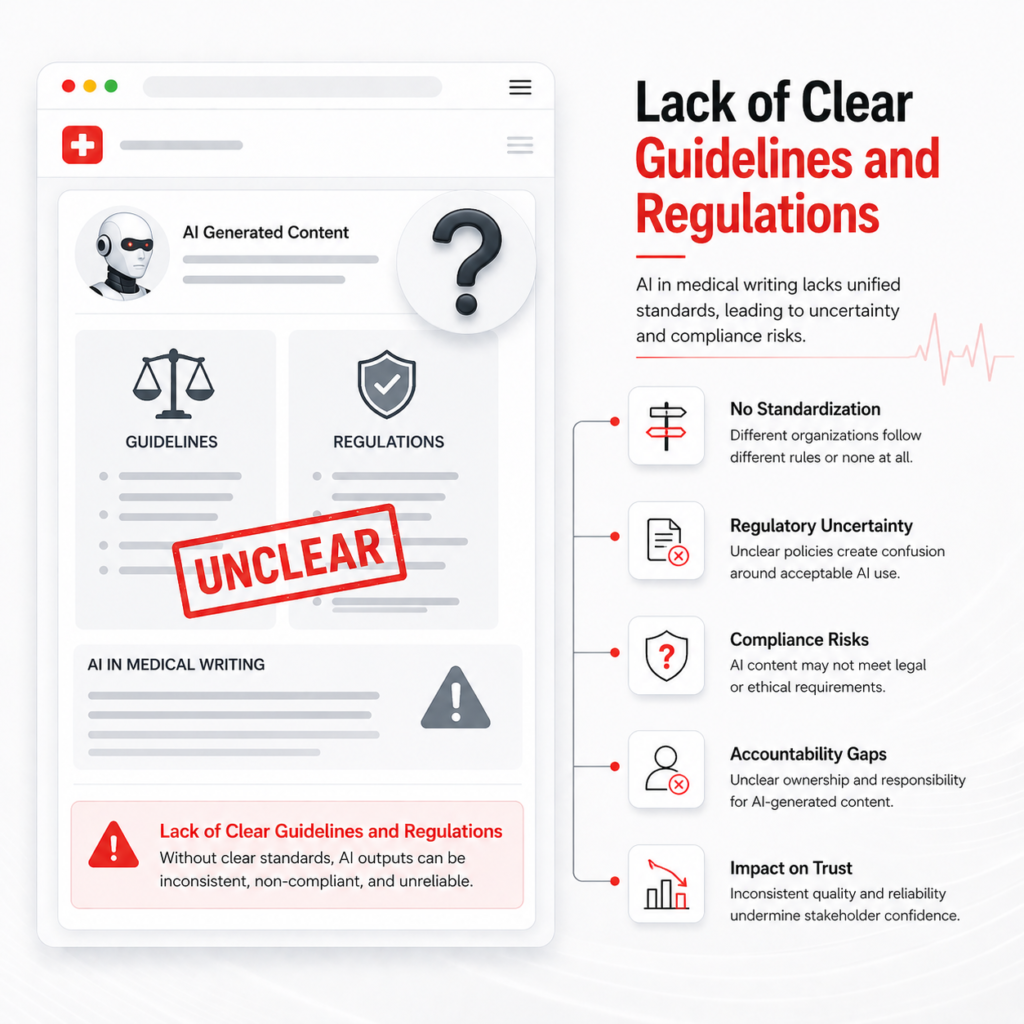

#5 Lack of Clear Guidelines and Regulations

The world is still catching up with the rapid growth of AI.

While several organisations are exploring frameworks, global guidelines on the ethical and legal implications of AI are still evolving. At present, there is no universal agreement on:

- Where AI can be safely used in medical writing

- How AI-generated content should be reviewed

- Who is responsible for verifying accuracy

- How responses should be modified in exceptional cases/scenarios

Until stronger regulations and best-practice guidelines are established, human oversight remains indispensable for the use of AI in all forms of medical communication.

#6 Lack of True Creativity and Originality

AI works by identifying patterns in existing data. That makes it efficient, but it also limits originality, because most of its output is built on what already exists online. The resulting writing tends to be repetitive, generic, and lacking in fresh perspective.

In medical writing, originality is about knowing which angle matters, what question the audience is actually asking, and how to explain it in a way that feels relevant.

That level of strategic thinking still comes from human judgment, not pattern prediction.

#7 Over-reliance and Loss of Skill

When AI starts doing most of the heavy lifting, there’s a tendency to accept outputs at face value rather than question them, refine content, or add deeper insight.

In medical writing, that’s a problem because the real value lies in interpreting information, identifying gaps, and applying clinical judgment.

Excessive dependence on AI for drafting can gradually weaken a medical writer's core skills, leaving content that may be technically accurate but is weak in relevance, depth and reasoning.

Take Home Message

Medical Writers, Assemble!

Your judgment, your unique voice and personality cannot be replaced by an algorithm.

AI can support medical writers, but it cannot replace clinical reasoning, contextual understanding, or ethical responsibility.

Integrate AI into your workflow to boost efficiency and reduce human error. Think of it like using the elevator instead of taking the stairs!

The writers who will thrive are the ones who learn to work with AI, not depend entirely on it.

If you’re looking for healthcare content that is accurate, strategically written, and built for both patients and search engines, let’s connect.